The healthcare industry is rapidly embracing digital transformation, and Electronic Health Record (EHR) systems have become the backbone of modern patient care. Hospitals, clinics, private practices, and healthcare networks rely on EHR platforms to streamline clinical workflows, improve patient outcomes, and maintain accurate medical records.

However, developing an EHR solution is far more complex than building a standard software application. Healthcare organizations must comply with strict regulations, particularly HIPAA (Health Insurance Portability and Accountability Act), which governs the security and privacy of patient data in the United States. As a result, building a HIPAA-compliant EHR system requires substantial investment in technology, security, compliance, and ongoing maintenance.

This guide explores the key factors that influence development costs, typical cost breakdowns, industry trends, common challenges, and cost-saving strategies to help healthcare organizations make informed decisions.

Understanding HIPAA-Compliant EHR Systems

A HIPAA-compliant EHR system is a digital platform designed to securely collect, store, manage, and share patient health information while adhering to federal privacy and security regulations.

These systems typically include:

- Patient record management

- Appointment scheduling

- Prescription management

- Clinical documentation

- Billing and insurance integration

- Laboratory information management

- Secure messaging

- Telehealth capabilities

- Analytics and reporting

Unlike traditional software, healthcare applications must prioritize data protection, access controls, encryption, audit trails, and compliance monitoring.

Why Healthcare Organizations Are Investing in EHR Systems

Healthcare providers are increasingly adopting EHR solutions because they offer significant operational and clinical benefits.

Improved Patient Care

Medical professionals gain instant access to comprehensive patient histories, enabling faster diagnoses and more informed treatment decisions.

Enhanced Operational Efficiency

Automated workflows reduce paperwork, minimize administrative burdens, and improve staff productivity.

Better Regulatory Compliance

Modern EHR platforms simplify documentation processes and help healthcare organizations meet federal and state regulations.

Increased Data Accuracy

Digital records eliminate many of the errors associated with paper-based documentation and fragmented systems.

According to industry reports, healthcare organizations that implement advanced EHR systems often experience improved care coordination and measurable reductions in administrative costs.

Key Factors Affecting EHR Development Costs

The cost of building a HIPAA-compliant EHR system varies significantly depending on project scope, functionality, and technical requirements.

1. System Complexity

The complexity of the platform is one of the biggest cost drivers.

A basic EHR solution may include:

- Patient profiles

- Appointment scheduling

- Medical history management

- Basic reporting

An enterprise-level system may additionally support:

- AI-powered diagnostics

- Predictive analytics

- Telemedicine features

- Multi-location management

- Real-time data synchronization

- Interoperability with third-party healthcare systems

The more sophisticated the system, the higher the development cost.

2. HIPAA Compliance Requirements

HIPAA compliance is not simply an add-on feature. It affects every aspect of system design and development.

Typical safeguards that support compliance include:

- Encryption of data at rest and in transit

- Multi-factor authentication

- Access control mechanisms

- Audit logging

- Data backup systems

- Disaster recovery planning

- Risk assessments

- Secure cloud infrastructure

Implementing these security measures increases both development and testing costs but is essential for avoiding costly penalties and data breaches.
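Audit logging is one of the safeguards above that is easy to underestimate. The HIPAA Security Rule requires audit controls that record activity in systems containing electronic protected health information, and those records are most useful when tampering is detectable. The sketch below shows one common technique, a hash-chained log in which each entry embeds the hash of the previous one. It is a minimal illustration, not a production design: the `AuditLog` class and its field names (`user_id`, `action`, `record_id`) are hypothetical, and a real system would also persist entries to protected storage.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditLog:
    """Append-only audit trail where every entry embeds the previous entry's hash.

    Field names (user_id, action, record_id) are illustrative assumptions,
    not a standard schema.
    """
    entries: list = field(default_factory=list)

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash all fields except the stored hash; sort_keys makes the JSON deterministic.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, user_id: str, action: str, record_id: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "action": action,
            "record_id": record_id,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; an edited, reordered, or deleted (non-final) entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("dr_smith", "VIEW", "patient-123")
log.append("nurse_lee", "UPDATE", "patient-123")
print(log.verify())  # True
log.entries[0]["action"] = "DELETE"  # simulate tampering with a stored entry
print(log.verify())  # False
```

On its own, the chain cannot detect removal of the most recent entry, so systems built this way usually also record the latest hash somewhere outside the application's control, such as write-once storage.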

3. User Roles and Permissions

Healthcare organizations often require multiple user roles, such as:

- Physicians

- Nurses

- Administrators

- Pharmacists

- Laboratory staff

- Patients

Each role requires customized permissions and secure access controls, adding complexity to development.
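A common way to implement these roles is role-based access control (RBAC): each role maps to a fixed set of permissions, and every request is checked against that set with a deny-by-default rule. The sketch below is a simplified illustration. The permission names and the role-to-permission mapping are hypothetical; a real mapping would come from the organization's own policies and HIPAA's "minimum necessary" principle.

```python
from enum import Enum, auto


class Permission(Enum):
    READ_CLINICAL = auto()
    WRITE_CLINICAL = auto()
    PRESCRIBE = auto()
    DISPENSE = auto()
    ENTER_LAB_RESULTS = auto()
    MANAGE_BILLING = auto()
    VIEW_OWN_RECORD = auto()


# Hypothetical mapping for the roles listed above; real policies will differ.
ROLE_PERMISSIONS = {
    "physician": frozenset({Permission.READ_CLINICAL, Permission.WRITE_CLINICAL, Permission.PRESCRIBE}),
    "nurse": frozenset({Permission.READ_CLINICAL, Permission.WRITE_CLINICAL}),
    "administrator": frozenset({Permission.MANAGE_BILLING}),
    "pharmacist": frozenset({Permission.READ_CLINICAL, Permission.DISPENSE}),
    "lab_staff": frozenset({Permission.ENTER_LAB_RESULTS}),
    "patient": frozenset({Permission.VIEW_OWN_RECORD}),
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: an unknown role gets an empty permission set."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())


print(is_allowed("physician", Permission.PRESCRIBE))  # True
print(is_allowed("nurse", Permission.PRESCRIBE))  # False
```

Role checks are only the first layer. A patient's `VIEW_OWN_RECORD` permission, for example, would also need a check that the requested record actually belongs to that patient, and each decision would typically be written to the audit log.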

4. Integration Requirements

Modern EHR systems rarely operate independently.

Many healthcare providers require integrations with:

- Laboratory systems

- Pharmacy platforms

- Insurance providers

- Billing software

- Medical devices

- Government healthcare databases

Developing and maintaining these integrations can significantly impact project budgets.

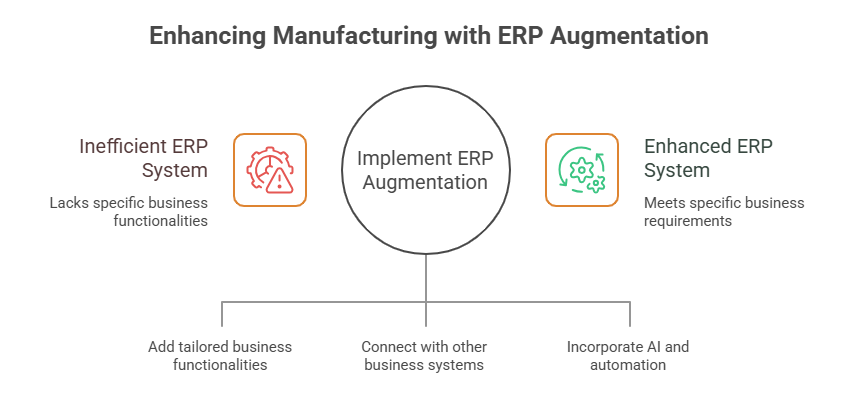

5. Telehealth Functionality

The rise of virtual healthcare has increased demand for integrated telemedicine capabilities.

Many organizations partner with a specialized Telemedicine App Development Company to implement features such as:

- Video consultations

- Secure messaging

- Remote patient monitoring

- Digital prescriptions

- Virtual appointment scheduling

Adding telehealth functionality can increase overall development costs but significantly enhances patient accessibility and engagement.

Estimated Cost Breakdown of HIPAA-Compliant EHR Development

While every project is unique, the following estimates provide a general cost range.

Basic EHR System

Estimated Cost: $50,000 – $120,000

Features:

- Patient records

- Scheduling

- Basic reporting

- User authentication

- Limited integrations

Suitable for:

- Small clinics

- Independent practices

- Specialty healthcare providers

Mid-Level EHR System

Estimated Cost: $120,000 – $300,000

Features:

- Advanced reporting

- Billing integration

- Patient portal

- Secure communication

- Compliance monitoring

Suitable for:

- Multi-provider clinics

- Regional healthcare centers

Enterprise EHR System

Estimated Cost: $300,000 – $1,000,000+

Features:

- AI-driven analytics

- Interoperability support

- Telemedicine integration

- Population health management

- Advanced cybersecurity measures

Suitable for:

- Hospital networks

- Healthcare enterprises

- Large medical organizations

Development Team Costs

Building a HIPAA-compliant EHR platform requires a multidisciplinary team.

Typical team members include:

| Role | Estimated Hourly Rate |
|---|---|
| Business Analyst | $40–$100 |
| UI/UX Designer | $30–$90 |
| Frontend Developer | $40–$120 |
| Backend Developer | $50–$150 |
| QA Engineer | $30–$100 |
| DevOps Engineer | $50–$150 |
| Security Specialist | $80–$200 |
| Project Manager | $50–$150 |

The location and expertise of the development team can significantly influence project costs.

Many healthcare organizations collaborate with a trusted Healthcare Software Development Company in USA to ensure compliance expertise, local regulatory knowledge, and high-quality development standards.
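To see how the hourly rates in the team table turn into a labor budget, multiply the estimated hours for each role by the low and high end of its rate range. The sketch below does exactly that. The rate ranges come from the table; the hour counts in `example_hours` are purely hypothetical and do not represent any particular tier of system.

```python
# Hourly rate ranges (USD) from the team table: (low, high).
RATES = {
    "Business Analyst": (40, 100),
    "UI/UX Designer": (30, 90),
    "Frontend Developer": (40, 120),
    "Backend Developer": (50, 150),
    "QA Engineer": (30, 100),
    "DevOps Engineer": (50, 150),
    "Security Specialist": (80, 200),
    "Project Manager": (50, 150),
}


def estimate_labor_cost(hours_by_role: dict) -> tuple:
    """Return the (low, high) labor cost for the given hours per role."""
    low = sum(hours * RATES[role][0] for role, hours in hours_by_role.items())
    high = sum(hours * RATES[role][1] for role, hours in hours_by_role.items())
    return low, high


# Hypothetical allocation of 3,450 hours; real figures come from a scoped project plan.
example_hours = {
    "Business Analyst": 200,
    "UI/UX Designer": 300,
    "Frontend Developer": 800,
    "Backend Developer": 1000,
    "QA Engineer": 500,
    "DevOps Engineer": 200,
    "Security Specialist": 150,
    "Project Manager": 300,
}
print(estimate_labor_cost(example_hours))  # (151000, 448000)
```

With identical hours, the high estimate is roughly three times the low one. That gap comes entirely from the rate differences, which is why team location and seniority can move a budget as much as feature scope does.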

Hidden Costs Many Organizations Overlook

When budgeting for EHR development, businesses often focus only on initial development expenses.

However, additional costs include:

Compliance Audits

Regular audits help ensure ongoing HIPAA compliance and identify potential vulnerabilities.

Cloud Infrastructure

Secure cloud hosting requires:

- Data encryption

- Backup services

- Redundancy mechanisms

- HIPAA-eligible environments covered by a Business Associate Agreement (BAA)

Employee Training

Healthcare staff must be trained to use the platform effectively while following security protocols.

Maintenance and Updates

Healthcare regulations evolve continuously, requiring regular software updates and compliance adjustments.

Annual maintenance typically costs 15% to 25% of the original development budget.
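Over several years, that maintenance percentage adds up quickly. The sketch below applies the 15% to 25% range to a build budget to estimate total cost of ownership. It assumes, for simplicity, that maintenance is a flat percentage of the original build cost every year, and it ignores hosting, audits, training, and inflation, which the other hidden costs above would add on top. The $200,000 build and five-year horizon are hypothetical inputs.

```python
def total_cost_of_ownership(build_cost: int, years: int,
                            maint_pct_low: int = 15, maint_pct_high: int = 25) -> tuple:
    """Return (low, high) cost: build budget plus flat annual maintenance.

    Assumes maintenance is a constant percentage of the original build cost
    each year; hosting, audits, training, and inflation are not included.
    """
    low = build_cost + build_cost * maint_pct_low * years // 100
    high = build_cost + build_cost * maint_pct_high * years // 100
    return low, high


# Hypothetical $200,000 build kept in service for five years.
print(total_cost_of_ownership(200_000, 5))  # (350000, 450000)
```

Under these assumptions, five years of maintenance costs between 75% and 125% of the original build, so it is worth budgeting for from the start.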

Industry Trends Influencing EHR Development Costs

Healthcare technology is evolving rapidly, introducing new opportunities and cost considerations.

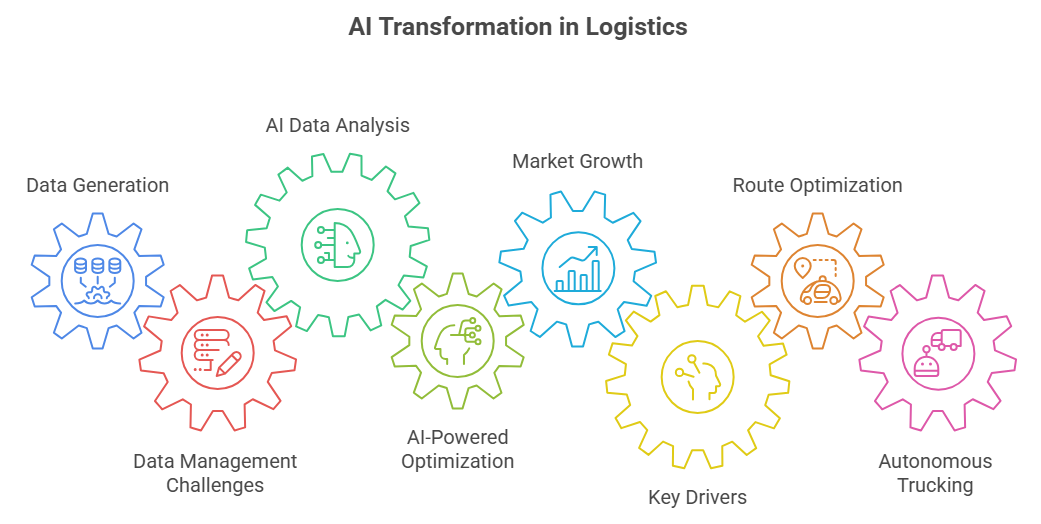

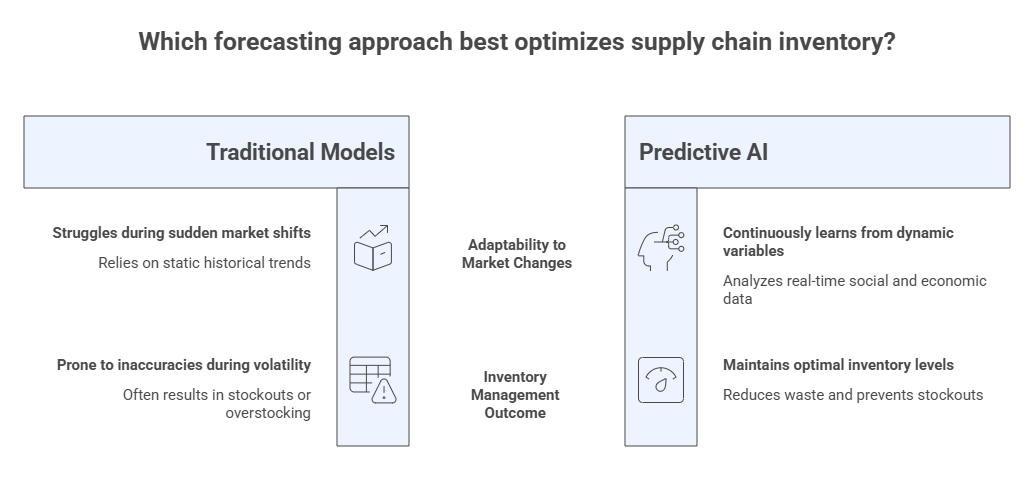

Artificial Intelligence Integration

AI is increasingly being used for:

- Clinical decision support

- Predictive analytics

- Medical imaging analysis

- Automated documentation

Although AI capabilities increase development costs, they often deliver significant long-term value.

Cloud-Based EHR Solutions

Cloud adoption continues to grow because it offers:

- Scalability

- Remote accessibility

- Lower infrastructure costs

- Faster deployment

Cloud-native systems are becoming the preferred choice for healthcare providers.

Interoperability Standards

Healthcare organizations increasingly require compliance with standards such as:

- HL7 v2 (messaging)

- FHIR (Fast Healthcare Interoperability Resources)

- DICOM (medical imaging)

Supporting interoperability improves data sharing but adds development complexity.
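Much of that added complexity is mapping internal data to the shapes the standards expect. FHIR, for instance, exchanges data as resources encoded in JSON or XML. The sketch below converts a simple internal patient record into a minimal FHIR R4 `Patient` resource using the standard `resourceType`, `identifier`, `name`, `gender`, and `birthDate` elements. The `to_fhir_patient` function and the identifier system URL are hypothetical; a real integration would use the organization's own identifier namespace and validate resources against the FHIR specification and any applicable profiles.

```python
import json
from datetime import date

# FHIR administrative-gender codes accepted by Patient.gender.
ALLOWED_GENDERS = {"male", "female", "other", "unknown"}


def to_fhir_patient(mrn: str, family: str, given: list, gender: str, birth_date: date) -> dict:
    """Map a hypothetical internal patient record to a minimal FHIR R4 Patient resource."""
    if gender not in ALLOWED_GENDERS:
        raise ValueError(f"unsupported gender code: {gender}")
    return {
        "resourceType": "Patient",
        "identifier": [{
            # Hypothetical namespace for medical record numbers (MRNs).
            "system": "https://example.org/fhir/mrn",
            "value": mrn,
        }],
        "name": [{"family": family, "given": given}],
        "gender": gender,
        "birthDate": birth_date.isoformat(),  # FHIR date format: YYYY-MM-DD
    }


patient = to_fhir_patient("123456", "Doe", ["Jane"], "female", date(1980, 4, 2))
print(json.dumps(patient, indent=2))
```

Every connected system (labs, pharmacies, payers) may need a mapping like this in each direction, which is a large part of why integration work scales with the number of partners.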

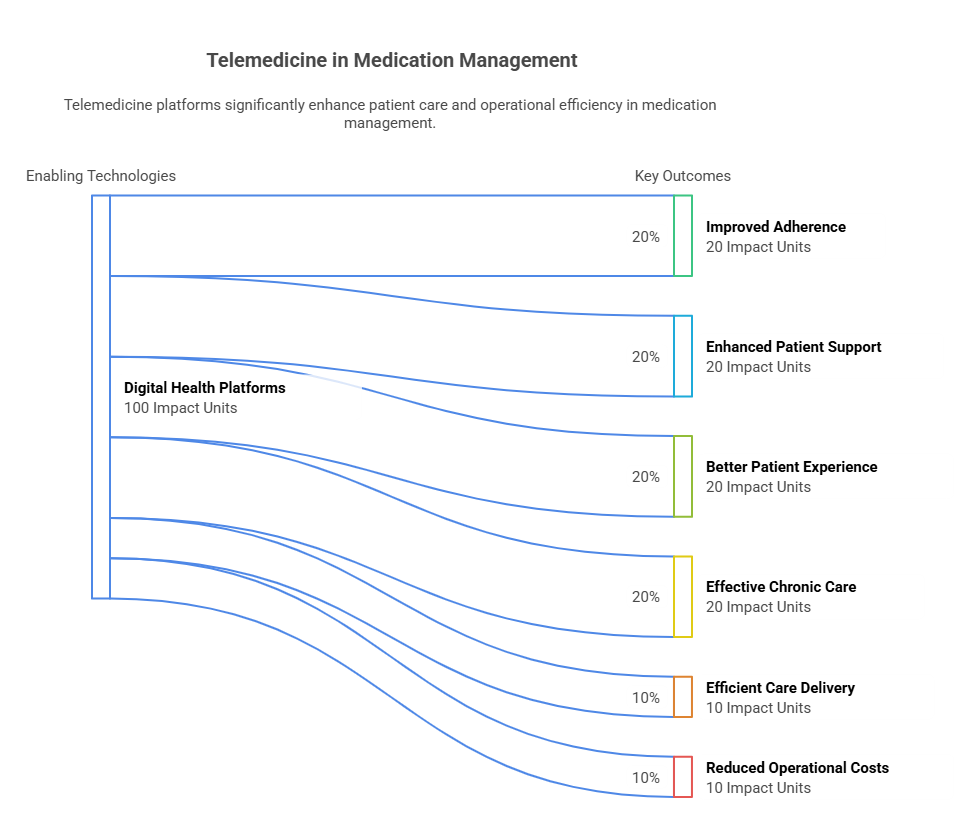

Remote Healthcare Expansion

As virtual care becomes mainstream, organizations are investing in telehealth-enabled EHR platforms. Businesses researching a comprehensive Guide to Telemedicine Software Development often discover that integrating telehealth directly into EHR systems improves both provider efficiency and patient satisfaction.

Common Challenges in EHR Development

Despite the benefits, EHR development presents several challenges.

Data Security Risks

Healthcare data remains one of the most targeted assets for cybercriminals.

Organizations must implement:

- Encryption

- Intrusion detection systems

- Continuous monitoring

- Secure authentication methods
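For secure authentication, a widely used second factor is the time-based one-time password (TOTP, RFC 6238), the six-digit code shown by authenticator apps. The sketch below implements TOTP with only the Python standard library to show how the mechanism works: an HMAC over the current 30-second time step, truncated to a short numeric code. It is illustrative only. The function names are hypothetical, and a production EHR would normally rely on a vetted authentication library or identity provider and store shared secrets encrypted.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional

STEP_SECONDS = 30  # RFC 6238 default time step


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low 4 bits choose a 4-byte window
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP keyed on the current time step."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // STEP_SECONDS), digits)


def verify_totp(secret: bytes, submitted: str, at: Optional[float] = None,
                window: int = 1, digits: int = 6) -> bool:
    """Accept codes from neighboring time steps to tolerate small clock drift."""
    now = time.time() if at is None else at
    return any(
        # compare_digest avoids leaking timing information during comparison
        hmac.compare_digest(totp(secret, now + i * STEP_SECONDS, digits), submitted)
        for i in range(-window, window + 1)
    )


# RFC 6238 test vector: ASCII secret "12345678901234567890" at T=59s, 8 digits.
print(totp(b"12345678901234567890", at=59, digits=8))  # 94287082
```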

Regulatory Compliance

HIPAA requirements are extensive and constantly evolving.

Failure to comply can result in:

- Financial penalties

- Legal consequences

- Reputational damage

User Adoption

Healthcare professionals often resist complicated software.

Successful EHR systems prioritize:

- Intuitive interfaces

- Minimal learning curves

- Workflow optimization

System Integration Complexity

Healthcare ecosystems involve numerous third-party applications and devices.

Ensuring seamless interoperability requires careful planning and experienced development teams.

Real-World Use Case: Hospital Network Modernization

A regional hospital network operating across multiple locations struggled with fragmented patient data and outdated record systems.

The organization invested approximately $650,000 in a HIPAA-compliant EHR platform that included:

- Centralized patient records

- Telehealth functionality

- Secure physician communication

- Billing automation

- Advanced analytics

Within two years, the hospital reported:

- Reduced administrative workload

- Faster patient onboarding

- Improved compliance management

- Better patient engagement

- Increased operational efficiency

Although the initial investment was substantial, the organization reported that the long-term returns significantly outweighed the development costs.

How to Reduce EHR Development Costs Without Compromising Compliance

Organizations can manage expenses through strategic planning.

Start with an MVP

Launching a minimum viable product allows healthcare providers to validate requirements before investing in advanced functionality.

Prioritize Essential Features

Focus first on:

- Patient records

- Scheduling

- Compliance requirements

- Security infrastructure

Additional features can be added later.

Use Scalable Architecture

A scalable system reduces future redevelopment costs and supports organizational growth.

Choose an Experienced Development Partner

Experienced healthcare technology providers understand compliance requirements and can prevent costly mistakes during development.

Measuring ROI of an EHR System

While development costs can be significant, the return on investment is often substantial.

Benefits include:

- Reduced paperwork

- Improved workflow efficiency

- Better patient outcomes

- Enhanced compliance

- Improved revenue cycle performance

- Lower administrative costs

Many healthcare organizations recover their investment through operational savings and improved service delivery within a few years.
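A quick way to sanity-check an ROI claim is to compute a simple payback period and a multi-year ROI from your own estimates. The sketch below does both without discounting future cash flows. All inputs are hypothetical: a $300,000 build, $180,000 in annual savings, and $60,000 in annual running costs (20% of the build, inside the 15% to 25% maintenance range mentioned earlier).

```python
def payback_period_years(build_cost: float, annual_savings: float, annual_running_cost: float) -> float:
    """Simple (undiscounted) payback: years of net savings needed to recover the build cost."""
    net_annual_benefit = annual_savings - annual_running_cost
    if net_annual_benefit <= 0:
        raise ValueError("net annual benefit must be positive to recover the investment")
    return build_cost / net_annual_benefit


def roi_percent(build_cost: float, annual_savings: float,
                annual_running_cost: float, years: int) -> float:
    """ROI over a fixed horizon: (total benefit - total cost) / total cost, as a percentage."""
    total_cost = build_cost + annual_running_cost * years
    total_benefit = annual_savings * years
    return (total_benefit - total_cost) / total_cost * 100


# Hypothetical inputs; replace with your own estimates.
print(payback_period_years(300_000, 180_000, 60_000))  # 2.5
print(roi_percent(300_000, 180_000, 60_000, 5))  # 50.0
```

If the projected savings barely exceed running costs, the payback period grows sharply, so it is worth testing the estimate with conservative savings figures as well as optimistic ones.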

Conclusion

Building a HIPAA-compliant EHR system is a significant investment, but it is also a strategic necessity for modern healthcare organizations. Development costs typically range from $50,000 for basic solutions to over $1 million for enterprise-grade platforms with advanced functionality, interoperability, and telehealth capabilities.

The final budget depends on system complexity, compliance requirements, integrations, security measures, and long-term maintenance needs. While the costs can seem substantial, the benefits, including improved patient care, operational efficiency, regulatory compliance, and long-term ROI, make EHR development a valuable investment.

Organizations that partner with experienced healthcare software experts can reduce risks, accelerate deployment, and build scalable solutions that support future growth.

Ready to Build a HIPAA-Compliant EHR Solution?

Whether you’re planning a custom EHR platform, integrating telehealth features, or modernizing legacy healthcare systems, partnering with the right development team can make all the difference. Connect with experienced healthcare technology specialists today to create a secure, scalable, and future-ready EHR solution tailored to your organization’s needs.

FAQs

1. How much does it cost to build a HIPAA-compliant EHR system?

The cost typically ranges from $50,000 to over $1 million, depending on features, integrations, compliance requirements, and system complexity.

2. Why is HIPAA compliance expensive?

HIPAA compliance requires advanced security measures, encryption, audit trails, access controls, compliance testing, and ongoing monitoring, all of which increase development costs.

3. How long does EHR development take?

A basic EHR system may take 4–6 months, while enterprise-grade platforms can require 12–24 months or longer.

4. Can telemedicine features be integrated into an EHR system?

Yes. Modern EHR solutions frequently include telehealth capabilities such as video consultations, secure messaging, remote monitoring, and digital prescriptions.

5. What are the biggest challenges in EHR development?

Common challenges include regulatory compliance, cybersecurity risks, user adoption, interoperability, and ongoing maintenance.

6. Is a cloud-based EHR system more cost-effective?

In many cases, yes. Cloud-based EHR solutions reduce infrastructure expenses, improve scalability, and simplify remote access while maintaining strong security standards.

You can also read: Cost and Features of Telemedicine App Development